Ross: Can we act now to stop the rise of the killer robots?

Sep 17, 2019, 7:34 AM | Updated: 9:57 am

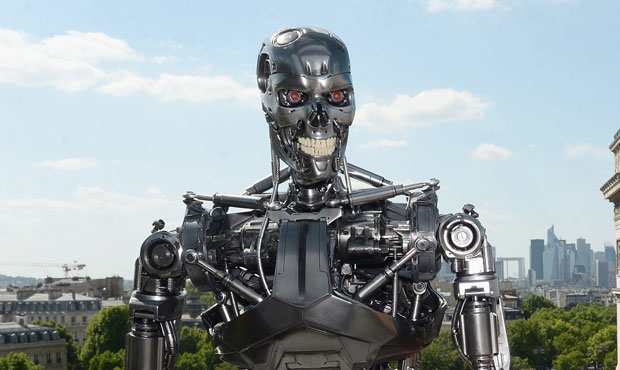

The Terminator of Hollywood fame is a killer robot programmed to hunt humans. (Getty Images)

Experts in artificial intelligence — about 4,500 of them — have now joined the Campaign to Stop Killer Robots. This is unfortunately not satire.

This is a reaction to the very real plans by the United States and other countries to design tanks, and ships, and drones that would search for and destroy enemy weapons – without being actively controlled by humans. In other words, killer robots.

It would be a little like an AR-15 that pulls its own trigger when it sees something it hates.

Last year, a Google engineer named Laura Nolan famously quit Google rather than work on a Pentagon project to teach drones how to rapidly identify humans. The idea, of course, was to make sure the drones avoid killing the humans, but Laura thinks that could easily be flipped. And this week she told The Guardian it is time for a global ban on any weapon that fires itself.

Laura Nolan, it turns out, studies Black Swan events. That’s when computers encounter conditions that no one expected.

“All of us have got horrible nasty problems in our systems,” Nolan said last year at a conference of computer scientists. “It’s just that some of us have been lucky and we don’t know it yet, or we’re not talking about it.”

So, how dumb would it be to take guns away from the mentally ill, only to trust bombs to a bunch of computers that even the people who run them don’t trust?
